Forget Twitter.

Imagine signing up for regular feeds of Kim Kardashian's emotions.

Mark Billinghurst of Canterbury University's Human Interface Technology Laboratory says it’s a possibility.

Professor Mark Billinghurst at the Human Interface Technology Lab. Photo RNZ/ Katy Gosset.

His team belongs to an interdisciplinary group working within Samsung's Think Tank to develop further applications for wearable technology such as Google Glass.

Professor Billinghurst says, while other research has looked at getting computers to respond to emotion, his team will be the first to use the computer to help share emotions between people.

PhD student Sudhanshu Ayyagari wearing the Google Glass display. Photo RNZ/Katy Gosset.

A PhD student working on the project, Sudhanshu Ayyagari, has been trialling the technology by wearing the Google Glass display on his head while his fingers are clipped to a number of sensors.

Professor Billinghurst says the sensors show his heart rate and oxygen saturation levels and also give an indication of sweat production.

"Then we can look at the raw data from that and we can understand what emotions he's feeling and then convey that to a remote person."

Emotions vs Emoticons

Sudhanshu Ayyagari says it's similar technology to that used in lie detectors, and the sensor feedback combines to give an overall picture of how people are feeling.

He says the data can even be used in place of an emoticon.

"Let’s say you've got some good news which you need to share with someone in your life. Rather than sending just an emoticon you can even send whatever you've recorded."

And he says the technology might also be useful in helping to understand the emotions of people who have autism or dementia and who may have difficulty expressing themselves.

Explaining Emotions

Mark Billinghurst says there's still work to be done in deciding how the emotions will be represented to the remote user.

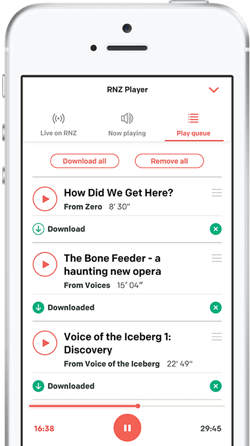

The emotional reading on the screen is "Happy" - a combination of the sensor results. Photo RNZ/Katy Gosset.

Currently the screen shows the sensor results and an emotion written in text such as "Happy".

But he says the emotion could instead be conveyed using a sound cue, like the music used to indicate happy or suspense-filled moments in a film.

Alternatively, he says, the screen colours could be changed.

"So, instead of showing the video with the normal realistic colours, you could make it look red if the person's looking angry or maybe more yellow or orangey if they're looking happy.

"So you can really look through the world through rose-coloured glasses."

Professor Billinghurst says, initially, it’s likely the emotion will be shared by people who already have a connection but that might change down the track.

"There may be opportunities or businesses where people will advertise, you know, "You can subscribe to Kim Kardashian's emotion feed and you pay $100 a month and you can see exactly how she's feeling any particular time."

"The Augmented Human"

The team has just been awarded $870,000 in Marsden Funding to progress their work and part of that will be in the emerging research area of "the augmented human".

Professor Billinghurst says the group wants to build models of human cognition that combine the computer and the human together into a single model.

"In that way we can see how the technology will impact human cognitive processes over time and also how we can build systems that are modelled on human cognitive processes to work the best with the human brain."

He says if people have wearable computers that are capturing all their movements, then that technology can serve as part of their long-term memory.

"So if you do lose your car keys, you should be able to query your computer and it will tell you where the car keys were placed, so, certainly, computers will be able to help in that case."